Cost × Speed × Accuracy × Scale: Rethinking the Economics of AI

Rethinking the Economics of AI

The conversation around AI in the enterprise has largely been framed in the wrong way. Most discussions reduce the problem to a simple tradeoff between cost and speed, with occasional concern about accuracy. This framing is intuitive, but it misses the deeper structural shift that is underway.

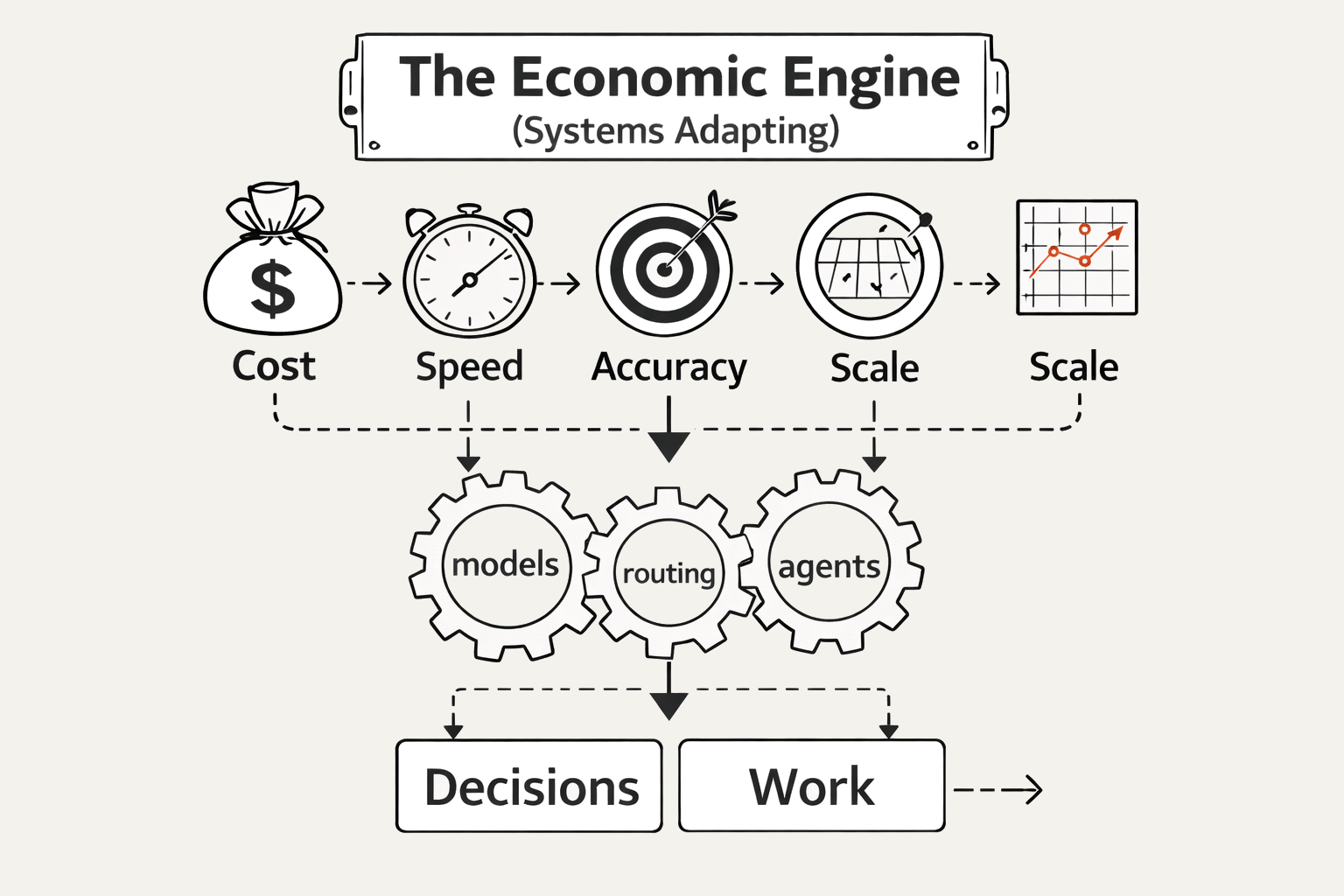

A more complete way to think about modern AI systems is through four interacting dimensions: Cost, Speed, Accuracy, and Scale. These are not independent variables. They multiply together to determine the true economic value of any AI system. When one of these dimensions is misaligned, the entire system breaks down. When they are aligned, the result is not incremental improvement but a phase change in capability and economics.
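To make the multiplicative claim concrete, here is a minimal sketch. It assumes each dimension can be expressed as a normalized score between 0 and 1, where higher is better (so cost appears as a hypothetical `cost_efficiency` score). The function name and the sample numbers are illustrative only, not a measurement of any real system.

```python
from math import prod


def system_value(cost_efficiency: float, speed: float,
                 accuracy: float, scale: float) -> float:
    """Multiply four normalized scores (each in [0, 1]) into one value.

    Assumption: every dimension is scored so that higher is better.
    """
    scores = (cost_efficiency, speed, accuracy, scale)
    if not all(0.0 <= s <= 1.0 for s in scores):
        raise ValueError("each score must be in [0, 1]")
    return prod(scores)


# Both systems have the same average score (0.8), but very different products.
balanced = system_value(0.8, 0.8, 0.8, 0.8)   # about 0.41
lopsided = system_value(0.2, 1.0, 1.0, 1.0)   # 0.2: weak cost efficiency dominates
```

Under this toy scoring, a system that is perfect on three dimensions but weak on one ends up with roughly half the value of a system that is merely good on all four, which is the sense in which one misaligned dimension drags down the whole.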

Today, most agentic AI systems are optimized for speed and scale, but they struggle with cost efficiency and long-term accuracy. This is not because the underlying models are weak. Frontier models are remarkably capable. The problem is architectural. We are deploying systems that do not learn in a durable way. As a result, they behave very differently from humans, and that difference is what drives the economic inefficiency that many companies are now observing.

A human expert does not solve the same problem repeatedly from scratch. They learn once and apply that learning many times. Their cost per outcome decreases over time because their knowledge compounds. In contrast, most AI agents today operate in a stateless manner. They rely on prompts, retrieval, and context windows to simulate knowledge. Each task requires reconstructing the reasoning process again and again. This leads to repeated token usage, repeated errors, and repeated corrections. Over time, especially in long-running workflows, the cost of running the agent can approach or even exceed the cost of the human labor it was meant to replace.

The Hidden Failure of Stateless AI

One of the clearest signals that the industry is at an inflection point comes from Deloitte’s latest State of AI in the Enterprise report: https://www.deloitte.com/us/en/what-we-do/capabilities/applied-artificial-intelligence/content/state-of-ai-in-the-enterprise.html

The data reveals a paradox. AI adoption is accelerating rapidly across organizations, with access expanding to a majority of the workforce and agentic systems expected to scale significantly in the coming years. Yet despite this momentum, real business impact remains limited. Only a small fraction of companies are seeing meaningful revenue growth from AI, and fewer than half are transforming their operations in a fundamental way.

This gap between adoption and value is the most important signal in the market today. It suggests that the limiting factor is no longer model capability or access to technology. Instead, it is how these systems are being used. Most organizations are layering AI onto existing workflows rather than redesigning those workflows around AI.

The result is predictable. Systems that are fast and scalable still fail to deliver durable value because they do not learn. They require repeated prompting, repeated retrieval, and repeated correction. They operate as stateless systems, which means every task is reconstructed rather than improved upon.

This is where economic inefficiency emerges. As usage increases, cost scales linearly or worse, while accuracy and reliability can degrade over time. The system becomes more expensive to operate even as it becomes more central to the business.

The core issue is that current agent systems are built on non-durable memory. Context windows and retrieval systems are not learning substrates. They are temporary scaffolding. They allow the model to access information, but they do not allow it to internalize that information. As a result, every improvement is transient. Every correction must be reapplied. Every piece of expertise must be reintroduced into the system repeatedly.

This creates what can be described as an economic ceiling for stateless agents. As tasks become more complex and workflows extend over longer horizons, the cost grows nonlinearly. Accuracy declines due to context dilution and interference. The system becomes brittle. Human oversight increases. The promise of automation begins to erode.

The path forward is not simply better prompting or larger context windows. It is not just cheaper tokens. The real shift is from stateless inference to stateful learning.
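One simple mechanism behind the nonlinear cost growth of long-horizon stateless workflows can be sketched as follows. The sketch assumes a workflow in which every step appends a fixed number of tokens to the context and the full accumulated context is re-sent as input at each step, with no caching, truncation, or summarization. The function and parameter names are hypothetical.

```python
def total_input_tokens(steps: int, tokens_per_step: int) -> int:
    """Input tokens processed when every step re-reads all prior context.

    Assumption: no prompt caching, truncation, or summarization.
    """
    total = 0
    context = 0
    for _ in range(steps):
        context += tokens_per_step  # new material appended at this step
        total += context            # the whole context is sent again as input
    return total


short_run = total_input_tokens(steps=10, tokens_per_step=1_000)    # 55_000
long_run = total_input_tokens(steps=100, tokens_per_step=1_000)    # 5_050_000
```

Under these assumptions, a workflow with ten times as many steps processes roughly ninety times as many input tokens, because the total grows with the square of the step count. Real deployments often soften this with caching or context compression, so the sketch is an upper-bound illustration rather than a cost model.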

This is the direction that LatentSpin represents. The idea is simple but powerful. Instead of treating each execution as an isolated event, the system learns from execution itself. Feedback, corrections, and expert input are converted into durable updates. The model does not just simulate understanding. It acquires it. When this happens, the entire economic equation changes.

Cost begins to decrease over time because the system no longer needs to reconstruct decisions. Speed remains high because inference is still fast. Accuracy improves because the system internalizes constraints, rules, and judgment patterns. Scale becomes a force multiplier rather than a cost amplifier because each additional execution benefits from prior learning. In other words, the system starts to behave like a human expert, but at machine scale.

This is a fundamentally different paradigm. Instead of optimizing token usage within a static system, the system itself evolves. Instead of externalizing knowledge into prompts and retrieval layers, knowledge becomes embedded in the model. Instead of relying on repeated correction, the system preserves improvements. This shift has profound implications for the broader AI landscape, particularly in relation to the current concentration of power among frontier model providers.

Today, there is a growing concern that AI value is being captured primarily by a small number of companies that control the most advanced models. This creates a form of frontier monopoly. Organizations depend on these models for intelligence, but they struggle to differentiate because the underlying capability is shared. The result is a race to build agents and applications on top of the same foundation, often leading to similar limitations and similar cost structures.

Stateless to Compounding Intelligence

The LatentSpin approach challenges this dynamic by shifting value creation from the model itself to the learning process around the model.

If organizations can teach models based on their own workflows, expertise, and data, then intelligence becomes localized and proprietary. The model is no longer just a powerful but generic reasoning engine. It becomes a specialized system that reflects the unique knowledge of a company or individual. This breaks the dependency on static model capabilities and allows value to accumulate at the edge.

In this world, the competitive advantage is not who has access to the best base model. It is who can most effectively convert real-world experience into durable intelligence. It is who can create systems that learn continuously and compound over time.

From an economic perspective, this is critical. If AI remains a stateless, token-driven system, then cost will always be tied to usage. Efficiency gains will be limited. Organizations will constantly balance the benefits of speed and scale against the burden of cost. In contrast, if AI becomes a learning system, then cost per outcome can decrease over time. The more the system is used, the more efficient it becomes.
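The contrast between usage-tied cost and declining cost per outcome can be illustrated with a toy model. Everything here is an assumption made for illustration: the stateless system pays a constant cost per task, while the learning system's per-task cost decays geometrically toward a floor. The decay curve, the parameter names (`initial_cost`, `floor_cost`, `retention`), and the numbers are hypothetical and not derived from any measured system.

```python
def stateless_cost(n_tasks: int, cost_per_task: float) -> float:
    """Cumulative cost when every task costs the same (cost tied to usage)."""
    return n_tasks * cost_per_task


def learning_cost(n_tasks: int, initial_cost: float,
                  floor_cost: float, retention: float) -> float:
    """Cumulative cost when per-task cost decays geometrically toward a floor.

    Assumption: after each task, the gap between the current cost and
    floor_cost shrinks by the factor `retention` (0 < retention < 1).
    """
    total = 0.0
    cost = initial_cost
    for _ in range(n_tasks):
        total += cost
        cost = floor_cost + (cost - floor_cost) * retention
    return total


stateless_total = stateless_cost(100, cost_per_task=1.0)
learning_total = learning_cost(100, initial_cost=1.0,
                               floor_cost=0.2, retention=0.9)
```

With these illustrative parameters, the stateless system spends 100 units over 100 tasks while the learning system spends roughly 28. The point of the sketch is the shape of the curves, not the specific ratio: in one case the average cost per outcome stays flat, and in the other it falls as usage accumulates.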

This aligns directly with the Cost × Speed × Accuracy × Scale framework. A system that improves along all four dimensions simultaneously is not just better. It is structurally superior. It enables new categories of applications that were previously uneconomical. It allows organizations to tackle complex, high-value workflows that require both precision and adaptability.

It also changes the role of humans in the system. Instead of being continuous operators and correctors, humans become teachers. Their expertise is captured once and reused indefinitely. This shifts effort from execution to knowledge transfer. It turns human insight into a compounding asset.

Breaking the Frontier Monopoly

In many ways, this is the natural evolution of software. Software historically allowed us to encode deterministic logic. AI allows us to encode probabilistic reasoning. The next step is to encode adaptive intelligence that evolves with use.

The current generation of agentic systems has revealed both the potential and the limitations of this approach. They have shown that speed and scale are no longer the primary constraints. The challenge now is efficiency and durability. The systems that solve this will define the next era of enterprise AI.

The conclusion is straightforward but important. The future of AI is not just about faster models or cheaper tokens. It is about systems that learn. It is about shifting from stateless execution to compounding intelligence. It is about aligning cost, speed, accuracy, and scale in a way that creates sustainable value.

When agents begin to behave like humans economically, learning from experience and improving over time, but operate at machine scale, the entire equation changes. The economic ceiling that exists today disappears. The frontier monopoly weakens. And the power to create value moves into the hands of those who can teach their systems best.