The Three Modes of Continuous Learning Agents

Agents Don’t Learn, and That’s Becoming a Problem

The conversation around AI agents has rapidly evolved over the past year. Early excitement focused on autonomy, with the belief that once models became powerful enough, they would be able to operate independently across complex business workflows. While progress has been significant, a deeper limitation has emerged. The challenge is not just intelligence. It is learning. More specifically, it is the absence of continuous learning during real-world execution.

Most agent systems today behave statically. They depend on prompts, retrieval, and orchestration layers that simulate adaptability but do not truly evolve. These systems assume that relevant knowledge can be injected at runtime through context windows. However, enterprise knowledge is neither static nor universally available. It is proprietary, dynamic, and often embedded in the decisions and workflows of experienced operators. This knowledge is absent from frontier models and cannot be fully captured through retrieval alone.

As context grows to accommodate more information, it becomes increasingly difficult to manage. Signal degrades, relevance decays, and agents lose the ability to reason effectively across long-running tasks. When conditions change, humans must intervene, reconfigure, or retrain. This creates fragility and prevents agents from becoming long-term, compounding assets inside organizations.

A new methodology is emerging that reframes how agents are built and deployed. Instead of treating learning as something that happens offline, this approach embeds learning directly into execution. Knowledge workers do not train models in the traditional sense. They teach them through real work. Over time, this teaching accumulates into durable capability. Agents improve not because they were retrained in isolation by ML or AI experts, but because they participated in actual workflows and learned from them.

From Training Models to Teaching Agents via Work

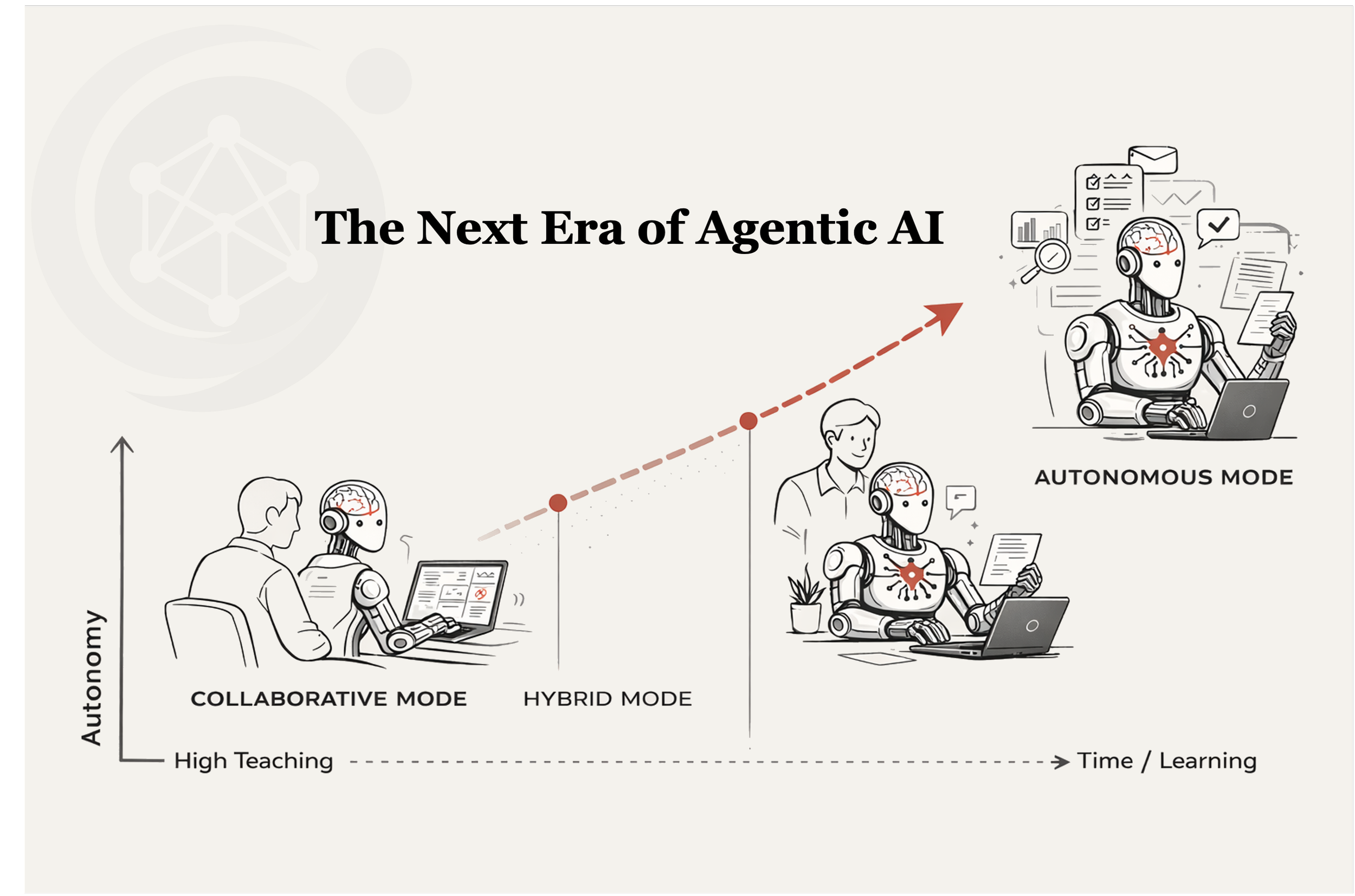

This shift enables what can be described as three distinct modes of continuous learning agents. These modes are not separate product categories but points on a spectrum. They represent different ways in which humans and agents interact across time, complexity, and trust. These modes only become practical when learning is embedded directly into execution rather than dependent on static training or ever-expanding context. They are also the key to understanding how continuous learning systems will be adopted in real-world environments.

The first mode is autonomous agent execution. This is the most familiar concept and often the most misunderstood. In the traditional approach, a worker’s expertise must be translated into datasets, prompts, or workflow logic by ML and AI experts before an agent can operate effectively. These systems rely on upfront model training or context engineering and struggle to adapt as conditions change.

With continuous learning systems, this model shifts. Knowledge workers can teach agents directly through real work, turning demonstrations, corrections, and decisions into durable learned behavior. In this mode, teaching is concentrated upfront, during the period in which the agent learns a specific workflow. Once the agent reaches a sufficient level of competence, it begins to operate independently.

The defining characteristic of this mode is that teaching is front-loaded, even though learning does not stop after deployment. The goal is to reach a point where the agent can execute long-running or complex tasks without constant human involvement. These tasks are typically structured around clear outcomes such as processing documents, managing operational pipelines, or executing repeatable business functions. Over time, the agent continues to improve through occasional corrections and feedback, while the majority of execution remains autonomous.

This mode is powerful because it enables scale. A single knowledge worker can effectively multiply their impact by deploying agents that operate continuously on their behalf. However, it also requires a higher degree of trust and maturity. Organizations must be confident that the agent can handle edge cases, adapt to changes, and maintain accuracy without constant supervision. For this reason, fully autonomous agents are often the end state rather than the starting point.

The second mode is collaborative or copilot execution. In this mode, the agent operates alongside the knowledge worker in real time. Instead of being deployed to run independently, the agent becomes an active participant in the workflow. The human and the agent work together, making decisions, evaluating options, and responding to changing conditions as they occur.

The defining characteristic of this mode is continuous teaching. Every interaction becomes a learning opportunity. When the agent produces an output, the knowledge worker can refine it. When a decision is made, the reasoning behind it can be captured. Over time, the agent internalizes these patterns and begins to anticipate the needs of the user. What would otherwise be transient context becomes persistent capability.
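To make this teaching loop concrete, here is a deliberately minimal Python sketch of how a copilot session might turn a worker's refinements into persistent behavior. It is a hypothetical illustration, not a description of any particular product: `Lesson` and `CopilotSession` are invented names, the invoice scenario is made up, situations are matched by exact string, and lessons live in an in-memory dictionary. A real continuous learning system would need to generalize across similar situations and might consolidate what it learns into the model itself rather than a lookup table.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Lesson:
    """One captured piece of teaching: what the agent proposed, what the worker chose, and why."""
    situation: str
    agent_output: str
    corrected_output: str
    rationale: str


@dataclass
class CopilotSession:
    """Hypothetical copilot loop that turns each human refinement into a stored lesson."""
    lessons: dict[str, Lesson] = field(default_factory=dict)

    def propose(self, situation: str, draft: str) -> str:
        # If the worker has already taught the agent how to handle this exact
        # situation, reuse that learned behavior instead of the fresh draft.
        lesson = self.lessons.get(situation)
        return lesson.corrected_output if lesson else draft

    def review(self, situation: str, proposed: str, final: str, rationale: str = "") -> None:
        # Only disagreements carry new information: when the worker changes the
        # output, record the correction and the reasoning behind it.
        if final != proposed:
            self.lessons[situation] = Lesson(situation, proposed, final, rationale)


# Example (hypothetical scenario): the worker corrects one decision once,
# and the session applies that correction the next time.
session = CopilotSession()
situation = "invoice missing PO number"
first = session.propose(situation, draft="Reject invoice")
session.review(situation, first, final="Route to procurement",
               rationale="PO numbers can be added after receipt")
print(session.propose(situation, draft="Reject invoice"))  # Route to procurement
```

In this sketch an output the worker accepts unchanged produces no lesson, while a refinement, together with its stated rationale, becomes behavior the session reuses without the worker having to restate it.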

This mode is particularly well suited for workflows that are dynamic, judgment-based, and sensitive to context. These are environments where decisions cannot be fully predefined and where expertise plays a critical role. Because the agent is always present and continuously learning, it receives feedback far more often than in autonomous mode, which leads to faster improvement and more immediate value.

From an adoption perspective, collaborative execution is often the most effective entry point. It requires less upfront investment and carries lower risk. Knowledge workers do not need to trust the agent to operate independently from day one. Instead, they can gradually build confidence as the agent demonstrates its capabilities. At the same time, the system accumulates the learning generated through these interactions, creating a growing foundation of intelligence that compounds over time.

The third mode is hybrid or progressive autonomy. This mode represents the natural evolution from collaboration to independence. It begins with the agent operating in a copilot role, learning continuously from the knowledge worker. As the agent becomes more capable, it starts to take on larger portions of the workflow. Eventually, it transitions into handling entire tasks with minimal oversight.

The defining characteristic of this mode is the shift in teaching patterns over time. Early on, teaching is frequent and tightly coupled with execution. The knowledge worker is heavily involved in guiding the agent. As the agent improves, the need for intervention decreases. Teaching becomes more occasional and focused on edge cases or new scenarios. The agent moves from being an assistant to being a partner and eventually to being an independent operator.
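One way to picture this shift is as a gate that widens the agent's responsibility as the need for intervention falls. The Python sketch below is a hypothetical illustration, not a prescribed method: it records whether the human had to correct each of the agent's recent tasks and maps the correction rate over a sliding window onto the three roles just described (assistant, partner, independent operator). The names `Role` and `AutonomyGate`, the window size, the minimum sample count, and both thresholds are assumptions chosen for readability.

```python
from collections import deque
from enum import Enum


class Role(Enum):
    ASSISTANT = "assistant"      # human drives; agent proposes and is corrected often
    PARTNER = "partner"          # agent handles larger portions; human reviews results
    INDEPENDENT = "independent"  # agent handles whole tasks; human steps in for edge cases


class AutonomyGate:
    """Hypothetical policy that widens an agent's role as its recent correction rate falls."""

    def __init__(self, window: int = 50, min_samples: int = 20,
                 partner_below: float = 0.20, independent_below: float = 0.05):
        # Sliding window of recent outcomes; True means the human had to correct the agent.
        self.outcomes = deque(maxlen=window)
        self.min_samples = min_samples
        self.partner_below = partner_below
        self.independent_below = independent_below

    def record(self, corrected: bool) -> None:
        self.outcomes.append(corrected)

    def correction_rate(self) -> float:
        # With no history, assume the worst case (every task needs correction).
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 1.0

    def role(self) -> Role:
        # Until there is enough evidence, keep the agent in a collaborative role.
        if len(self.outcomes) < self.min_samples:
            return Role.ASSISTANT
        rate = self.correction_rate()
        if rate < self.independent_below:
            return Role.INDEPENDENT
        if rate < self.partner_below:
            return Role.PARTNER
        return Role.ASSISTANT


# Example: 2 corrections in the last 20 tasks (10%) earns a partner role.
gate = AutonomyGate()
for task in range(20):
    gate.record(corrected=task < 2)
print(gate.role())  # Role.PARTNER
```

Because the window slides, autonomy is not a one-way ratchet in this sketch: if a new scenario causes corrections to climb, the gate moves the agent back to a more supervised role, which matches the idea that later teaching concentrates on edge cases and new situations.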

This progression is critical because it aligns with how trust is built in real-world systems. Organizations are unlikely to deploy fully autonomous agents without first observing their behavior in a controlled, collaborative setting. Hybrid mode provides a pathway for this transition. It allows agents to prove themselves gradually while continuing to learn and improve.

Summary: The Modes of Continuous Learning Agents

Across all three modes, a unifying concept emerges. Continuous learning agents operate across different timescales of work. In collaborative mode, learning happens at the level of seconds and minutes through rapid interactions. In hybrid mode, learning unfolds over hours and days as workflows are partially automated. In autonomous mode, learning accumulates over weeks and months as agents handle long-running processes.

What makes this possible is the ability to convert context into persistent capability. Traditional systems rely on context windows to provide relevant information at the moment of execution. However, this information is transient and decays over time. Continuous learning systems change this dynamic. They take the insights generated during execution and transform them into durable behavior that can be applied in the future.

This has profound implications for how organizations think about knowledge and expertise. Instead of being locked inside individuals or scattered across systems, knowledge becomes embedded within agents that can be reused, shared, and improved over time. The act of doing work becomes the act of building intelligence. Every interaction contributes to a growing foundation of capability that compounds in value.

The three modes of continuous learning agents are not mutually exclusive. In practice, they coexist within the same organization and often within the same workflow. A knowledge worker might collaborate with an agent on complex decisions while relying on autonomous agents to handle routine tasks. Over time, workflows shift along the spectrum as agents become more capable and trusted.

The key insight is that autonomy is not the starting point. It is the outcome of a learning process that begins with collaboration. By focusing on how agents learn during execution rather than how they perform in isolation, organizations can unlock a more scalable and resilient approach to automation.

As this paradigm continues to evolve, the distinction between human and machine work will become less rigid. Agents will not simply replace tasks. They will participate in the ongoing process of learning and adaptation that defines modern organizations. The result is a system where intelligence is not static but continuously improving, shaped by the people who use it and the work it performs.