How Agents Choose, Use, and Actually Teach Models

There has been a lot of discussion about how artificial intelligence (AI) is changing software development and, more broadly, workflow automation. Most of that discussion has focused on the human side of the equation.

Which tools do developers prefer?

Which models feel the most capable?

Which interface leads to the best experience?

These are useful questions, but they may not be the most important ones going forward. Different questions are starting to emerge, driven not by how humans use AI, but by how agents operate.

Which models will AI agents prefer?

Will these agents play favorites?

This is not a small shift in perspective. Humans interact with models through prompts, conversations, and interfaces. Agents interact with models through loops, tasks, retries, and systems. The shape of the interaction is fundamentally different, and that difference has implications for which models perform best in practice.

When a developer uses a model, they value clarity, explanation, and collaboration. When an agent uses a model, it values consistency, speed, and action. Humans tolerate ambiguity because they can interpret it. Agents struggle with ambiguity because they need to execute on it. Humans enjoy creativity. Agents prefer predictability. This difference alone suggests that the models that feel best to humans are not necessarily the ones that perform best for agents.

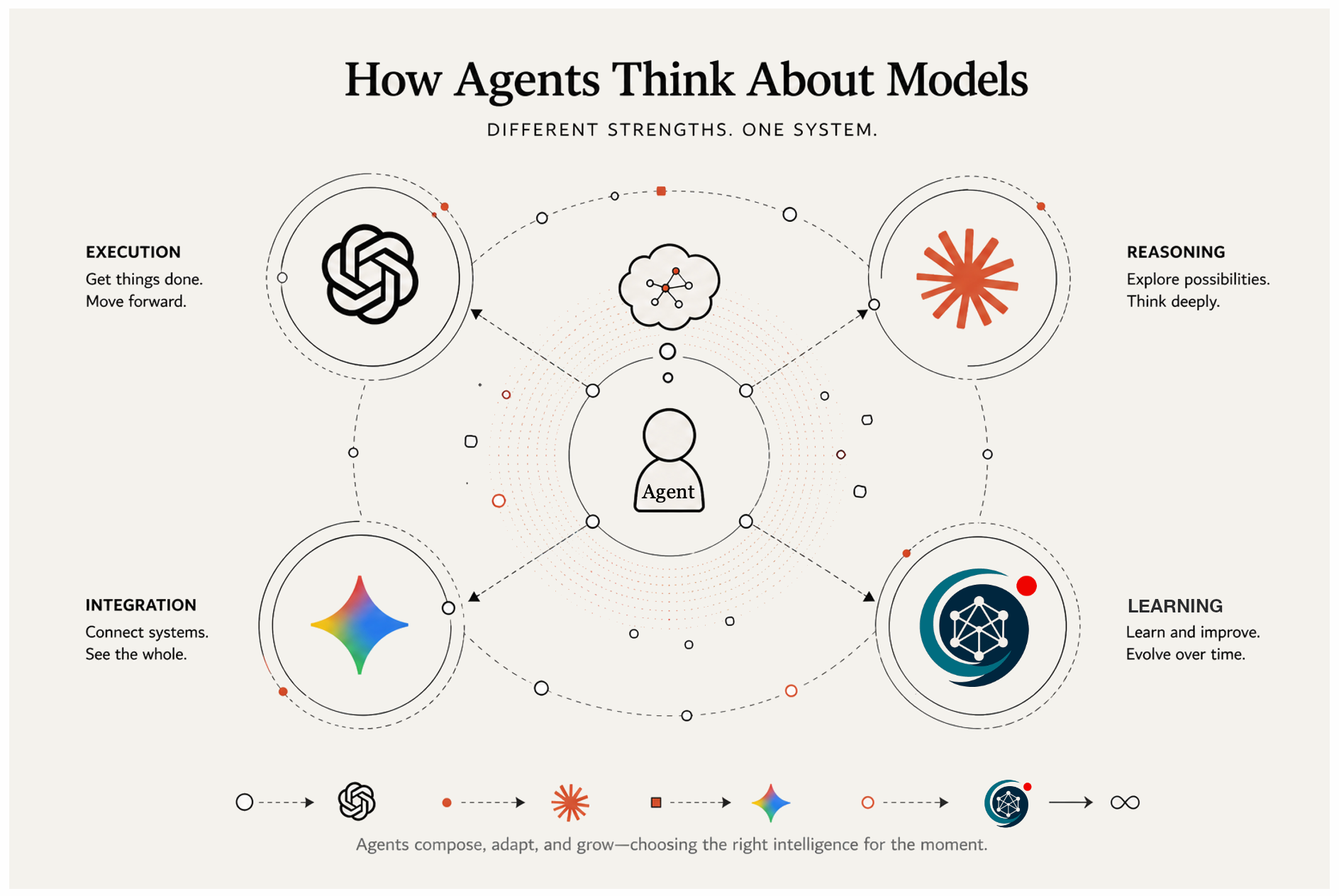

Today, we can already see three distinct philosophies emerging across the major players:

OpenAI tends to emphasize execution.

Anthropic tends to emphasize reasoning.

Google (Gemini) tends to emphasize integration and systems.

These are not marketing distinctions. They show up in how the models behave under real workloads.

Execution-focused models are designed to move forward. They take a task, produce an output, and can be run repeatedly in a loop. They are useful when the goal is to get something done end to end with minimal friction.

Reasoning-focused models are designed to think more deeply. They break problems apart, explore edge cases, and often ask for clarification. They are useful when the goal is to understand before acting.

Integration- and systems-focused models are designed to operate within larger environments. They integrate with tools, data sources, and multimodal inputs. They are useful when the goal is coordination across a broader context.

For a human user, these differences often come down to preference. Some developers want a model that feels like a collaborator. Others want a model that feels like an executor. Some want to stay inside an IDE. Others prefer a terminal. This feels similar to older debates such as vi versus Emacs versus an IDE, or tabs versus spaces. Strong opinions form because the tools align with how people like to work.

AI agents change that dynamic. An agent does not have a preference in the human sense. It has constraints. It has a structure. It has a loop that defines how it operates. Those constraints implicitly determine which model works best for it.

An agent that runs fast iterative loops will tend to prefer execution-oriented models. It needs outputs that are actionable and consistent. It needs to be able to retry quickly and converge on a result. A model that pauses to reason too deeply or asks too many questions can slow that loop down. In that context, forward progress matters more than perfect understanding.

An agent that is responsible for planning or complex decision making will tend to prefer reasoning-oriented models. It benefits from deeper analysis, from exploring alternatives, and from identifying edge cases before acting. In that context, slowing down to think can actually improve outcomes.

An agent that operates across multiple systems will tend to prefer models that are well integrated into those systems. It needs structured outputs, strong tool use, and the ability to coordinate across different data sources. In that context, connectivity and consistency across environments matter more than any single output.

What this suggests is that agents are not neutral consumers of models. Their architecture effectively selects the model that fits their mode of operation. In that sense, agents have preferences, even if those preferences are implicit.
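To make that implicit selection concrete, here is a minimal Python sketch that maps an agent's operating constraints to the model philosophy it would tend to favor. The `AgentProfile` fields, the `ModelStyle` labels, and the thresholds (three connected systems, a ten-second latency budget) are hypothetical illustrations, not measurements and not any vendor's API.

```python
from dataclasses import dataclass
from enum import Enum


class ModelStyle(Enum):
    EXECUTION = "execution"      # act quickly, loop, retry
    REASONING = "reasoning"      # analyze before acting
    INTEGRATION = "integration"  # coordinate tools and data sources


@dataclass
class AgentProfile:
    loop_latency_budget_s: float  # hypothetical: time allowed per loop iteration
    needs_planning: bool          # does the agent make multi-step decisions?
    connected_systems: int        # number of tools/data sources it coordinates


def preferred_style(profile: AgentProfile) -> ModelStyle:
    """Return the model philosophy an agent's constraints implicitly favor."""
    # Agents that span many systems depend most on tool use and structured output.
    if profile.connected_systems >= 3:
        return ModelStyle.INTEGRATION
    # Planning agents benefit from deeper analysis, if their loop can afford it.
    if profile.needs_planning and profile.loop_latency_budget_s >= 10:
        return ModelStyle.REASONING
    # Otherwise, fast iterative loops favor forward progress.
    return ModelStyle.EXECUTION


# Example profiles (hypothetical values).
fast_loop = AgentProfile(loop_latency_budget_s=1.0, needs_planning=False, connected_systems=1)
planner = AgentProfile(loop_latency_budget_s=30.0, needs_planning=True, connected_systems=1)
orchestrator = AgentProfile(loop_latency_budget_s=5.0, needs_planning=True, connected_systems=4)
```

The specific cutoffs matter less than the shape of the decision: the agent's loop, not a human's taste, drives the choice.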

This already changes how we think about the current landscape. Tools like Codex, Claude, and Cursor are often compared directly, but they are not simply competing on a single axis. They represent different approaches to how intelligence is used in the development process. One leans toward execution, one toward reasoning, and one toward keeping the developer in the loop through tight interaction. The choice between them is often a reflection of how much control the human wants to retain and how much they are willing to delegate.

The next shift is even more interesting. If agents become the primary builders of software and workflows, the question changes again. It is no longer just which model they prefer, but which models they can teach, evolve, and make their own.

This introduces a new requirement. It is not enough for a model to be capable. It must be adaptable. An agent that runs continuously in a production loop will encounter patterns. It will see which outputs work and which do not. It will develop a sense of what good looks like for its specific tasks. If the model remains static, that learning is lost. The agent has to work around the model rather than improve it.
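One lightweight way an agent could keep that learning, without changing any model weights, is to remember which outputs succeeded and feed them back as examples in future prompts. The sketch below assumes a hypothetical `OutcomeMemory` class and plain-text prompts; it illustrates the idea and is not a production method for updating a model.

```python
from collections import defaultdict, deque
from typing import DefaultDict, Deque, Tuple


class OutcomeMemory:
    """Hypothetical helper: keep recent successful (task, output) pairs per task type."""

    def __init__(self, max_examples: int = 3) -> None:
        # Each task type gets a bounded queue; the oldest examples drop off first.
        self._examples: DefaultDict[str, Deque[Tuple[str, str]]] = defaultdict(
            lambda: deque(maxlen=max_examples)
        )

    def record(self, task_type: str, task: str, output: str, succeeded: bool) -> None:
        # Only outputs that worked are kept as examples of "what good looks like".
        if succeeded:
            self._examples[task_type].append((task, output))

    def build_prompt(self, task_type: str, task: str) -> str:
        # Prepend remembered successes as worked examples before the new task.
        parts = [f"Task: {t}\nOutput: {o}" for t, o in self._examples.get(task_type, ())]
        parts.append(f"Task: {task}\nOutput:")
        return "\n\n".join(parts)


memory = OutcomeMemory(max_examples=2)
memory.record("sql", "count users", "SELECT COUNT(*) FROM users;", succeeded=True)
memory.record("sql", "list orders", "SELEC * FROM orders", succeeded=False)
memory.record("sql", "list orders", "SELECT * FROM orders;", succeeded=True)
prompt = memory.build_prompt("sql", "count orders")
```

Heavier forms of adaptation, such as fine-tuning on the recorded examples, follow the same pattern of capturing outcomes and feeding them back, but change the model itself rather than its inputs.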

A different kind of system emerges when the model can be shaped by the agent itself. In that system, the agent refines the model over time. It adjusts behavior, improves outputs, and internalizes workflows. The boundary between using intelligence and producing intelligence starts to blur.

Such an agent is no longer just a user of intelligence. It becomes a producer of it. This is where the idea of agent-teachable models becomes important. Instead of training models once and deploying them broadly, we start to see models that evolve in context. They are influenced by the agents that use them, specialized to the workflows they support, and improved not through centralized training alone but through continuous interaction in real environments.

—

In practice, this likely leads to combinations rather than a single winning model. An advanced agent may rely on an execution layer to carry out tasks, a reasoning layer to plan and evaluate, and a system layer to integrate with the broader environment. On top of that, it may use an adaptive layer that captures what it has learned and feeds that back into its behavior.

This layered approach reflects the reality that no single model philosophy is sufficient on its own. Execution without reasoning can lead to errors. Reasoning without execution can lead to stagnation. Integration without adaptation can lead to rigidity. The agents that perform best will be the ones that can balance these capabilities and evolve over time.
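The layered composition described above can be sketched in a few lines of Python. Each layer is a plain function supplied by the caller: `plan` stands in for a reasoning model, `execute` for an execution model, `tools` for system integrations, and `lessons` for the adaptive layer. All names and the `tool:arg` step format are hypothetical; in a real agent each callable would wrap a model or service.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class LayeredAgent:
    # Reasoning layer: turns a goal (plus past lessons) into a list of steps.
    plan: Callable[[str, List[str]], List[str]]
    # Execution layer: carries out a single step and returns its result.
    execute: Callable[[str], str]
    # System layer: named integrations such as search, databases, or APIs.
    tools: Dict[str, Callable[[str], str]]
    # Adaptive layer: accumulated notes about steps that failed.
    lessons: List[str] = field(default_factory=list)

    def run(self, goal: str) -> List[str]:
        results = []
        for step in self.plan(goal, self.lessons):
            # Steps written as "tool:arg" go to a registered tool; others are executed directly.
            name, sep, arg = step.partition(":")
            if sep and name in self.tools:
                result = self.tools[name](arg)
            else:
                result = self.execute(step)
            results.append(result)
            # Record failures so later calls to plan() can take them into account.
            if result.startswith("error"):
                self.lessons.append(f"{step} -> {result}")
        return results


# Hypothetical stand-ins for real models and services.
def plan(goal: str, lessons: List[str]) -> List[str]:
    # A naive planner that skips the search step once it has failed before.
    if any(lesson.startswith("search:") for lesson in lessons):
        return [f"summarize {goal} from memory"]
    return [f"search:{goal}", f"summarize {goal}"]


agent = LayeredAgent(
    plan=plan,
    execute=lambda step: f"done: {step}",
    tools={"search": lambda query: "error: search service offline"},
)
first = agent.run("release notes")
second = agent.run("release notes")
```

Because failures recorded in `lessons` are passed back into `plan`, the second run routes around the step that failed in the first, which is the feedback loop the adaptive layer is meant to provide.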

The implication is that the future is not defined by one model winning. It is defined by how agents compose, select, and ultimately shape the models they depend on. The center of gravity shifts from the model itself to the system that uses it.

We are still early in this transition. Most models are still trained and updated in relatively static ways. Most agents are still constrained by the behavior of the models they call. But the direction is clear. As agents become more capable and more autonomous, the demand for models that can be adapted in context will increase.

We used to choose tools based on how we think. Now we are starting to build systems that choose tools based on how they think. The next step is building systems that improve those tools as they go. That is a very different model of intelligence.